To start off, if you haven't heard of or used Extreme Search in Splunk, here is some documentation. Extreme Search is meant to provide dynamic thresholds and context awareness when running searches for IR, key indicators, and more.

Let's go over how to build your own context and concepts. A "concept" is a qualitative idea represented by a term, for example Very Low, Low, Normal, High, or Extreme. So instead of writing > n in a search, you can say "is above High" to define your search. A "context" is a collection of terms that form a view of knowledge.
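To make the idea of concepts a bit more concrete, here is a minimal Python sketch. It is not how Extreme Search works internally: it simply cuts a numeric range into five equal-width bands with hard edges, which is my own simplification for illustration. The names `CONCEPTS`, `concept_for`, and `is_above` are hypothetical and do not exist in Splunk.

```python
# Illustration only: hard, equal-width bands standing in for the concepts.
# Assumption: five terms, ordered from smallest to largest.
CONCEPTS = ["Very Low", "Low", "Normal", "High", "Extreme"]


def concept_for(value, domain_min, domain_max):
    """Return the concept whose equal-width band contains value."""
    if domain_max <= domain_min:
        raise ValueError("domain_max must be greater than domain_min")
    # Clamp so values outside the domain land in the first or last band.
    clamped = min(max(value, domain_min), domain_max)
    width = (domain_max - domain_min) / len(CONCEPTS)
    index = min(int((clamped - domain_min) / width), len(CONCEPTS) - 1)
    return CONCEPTS[index]


def is_above(value, concept, domain_min, domain_max):
    """True when value falls in a band ranked higher than the given concept."""
    actual = concept_for(value, domain_min, domain_max)
    return CONCEPTS.index(actual) > CONCEPTS.index(concept)
```

With a range of 0 to 100, `is_above(85, "High", 0, 100)` is true while `is_above(70, "High", 0, 100)` is not, which is the same kind of question "is above High" asks in a search, just without a hard-coded n.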

One of the commands I like a lot is xsCreateDDContext (side note: if you search for this you get 2 results... this article will make 3). The xsCreateDDContext command builds a data-driven context, which contains concepts based on a variety of methods of mapping qualitative semantics to the data.

Here is a simple example of this. You can create a rolling statistical analysis of your Windows security events and create a standard deviation chart for each event code by computer.

An example of this could be the following:

index=winevents source="WinEventLog:Security" earliest=-30d@d latest=-1d@d | fields _time, ComputerName, EventCode | bin span=1d _time | stats count as Event_Code_Count by _time, ComputerName, EventCode | `context_stats(Event_Code_Count,EventCode)` | eval min=0 | xsCreateDDContext name=Event_Code_Count_by_ComputerName_1d type=domain terms=`xs_default_magnitude_concepts` class=EventCode scope=app

This search looks at your Windows security events over the last 30 days and keeps the time, ComputerName, and EventCode fields. It then buckets the events into 1-day spans and counts them per day, computer, and event code. From there, the context_stats macro computes the statistics behind your standard deviation charts, based on the event code count and the event code. This provides a lot of statistics. Since I personally want the min to be 0, I override the computed min with 0. Finally, I create the data-driven context with the domain type and define the class and the terms.
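If it helps to see the counting and summarizing steps outside of Splunk, here is a rough Python sketch of the same idea. It is only an approximation: I am assuming the statistics of interest are min, max, average, standard deviation, and count per event code, and the function names and sample events are made up for illustration.

```python
from collections import Counter, defaultdict
from datetime import datetime
from statistics import mean, stdev


def daily_counts(events):
    """Count events per (day, computer, event code), like 1-day buckets plus a count."""
    counts = Counter()
    for timestamp, computer, event_code in events:
        counts[(timestamp.date(), computer, event_code)] += 1
    return counts


def stats_by_event_code(counts):
    """Summarize the daily counts per event code; min is forced to 0 as in the search."""
    by_code = defaultdict(list)
    for (_day, _computer, event_code), count in counts.items():
        by_code[event_code].append(count)
    summary = {}
    for event_code, values in by_code.items():
        summary[event_code] = {
            "min": 0,  # overridden, mirroring eval min=0
            "max": max(values),
            "avg": mean(values),
            # stdev needs at least two samples, so fall back to 0.0
            "stdev": stdev(values) if len(values) > 1 else 0.0,
            "count": len(values),
        }
    return summary


# Made-up sample events: (timestamp, ComputerName, EventCode)
events = [
    (datetime(2024, 1, 1, 9, 0), "HOST-A", 4624),
    (datetime(2024, 1, 1, 10, 0), "HOST-A", 4624),
    (datetime(2024, 1, 1, 11, 0), "HOST-B", 4624),
    (datetime(2024, 1, 2, 9, 0), "HOST-A", 4624),
    (datetime(2024, 1, 2, 9, 5), "HOST-A", 517),
]
summary = stats_by_event_code(daily_counts(events))
```

Each entry in `summary` is one row of per-event-code statistics, which is roughly the kind of input a data-driven context is built from.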

Let's define a few more terms, because Splunk's documentation is bad.

type: can be one of two things

average_centered - centers the context on the average and uses size to scale term sizes

domain - the default, centers the set on (max-min)/2 and scales the context to cover the whole domain

class: a comma-separated list of classes

We could provide all the event codes that Windows could generate or, as I did above, just populate the list from the field, which has some downsides but is easier.

Now that we created this context, let's view it.

|xsDisplayContext Event_Code_Count_by_ComputerName_1d by 517

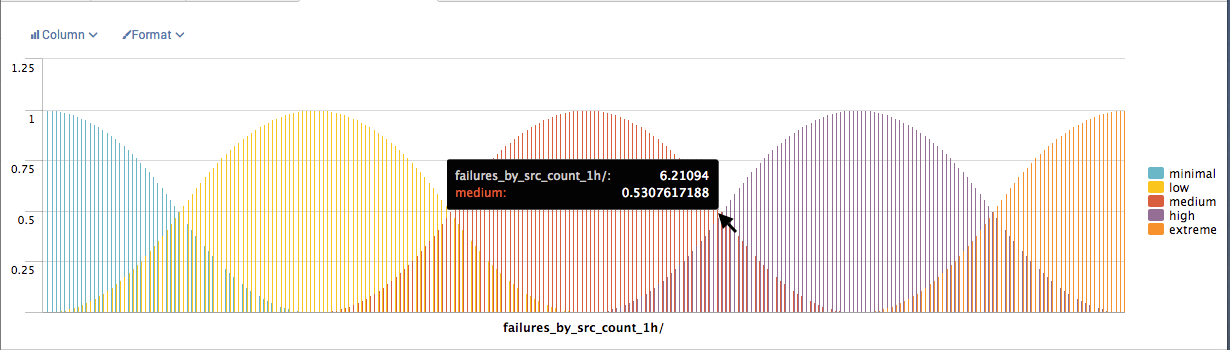

We should see a chart that looks something like this (this is just an example, not what the above code would generate).

This is great, and we can use this information in other searches, such as IR searches, to create alerts for security analysts when something goes to the extreme for your specific organization.

Here is how to create an IR type of search.

index=winevents source="WinEventLog:Security" | fields _time, ComputerName, EventCode | bin span=1d _time | stats count as Event_Code_Count by ComputerName, EventCode | xsWhere Event_Code_Count FROM Event_Code_Count_by_ComputerName_1d by EventCode is above High

This search pulls up a day's worth of logs and looks for ComputerNames that have an abnormally high count of a particular Security EventCode. Since this is abnormal for your organization's environment over the last 30 days, you will probably want to find out what is causing it. Is it just new software that is failing? Is it a compromise? Or is it just a bug in the system?
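For readers who want to reason about this kind of check outside of Splunk, here is a small Python stand-in. To be clear, this is not what xsWhere does: I am swapping in a plain "average plus k standard deviations" threshold per event code, and the function name, the value of `k`, and the sample numbers are all made up for illustration.

```python
def flag_outliers(todays_counts, baseline, k=2.0):
    """Return (computer, event_code, count) rows above avg + k * stdev for that event code."""
    flagged = []
    for (computer, event_code), count in sorted(todays_counts.items()):
        stats = baseline.get(event_code)
        if stats is None:
            continue  # no baseline for this event code, so nothing to compare against
        threshold = stats["avg"] + k * stats["stdev"]
        if count > threshold:
            flagged.append((computer, event_code, count))
    return flagged


# Made-up baseline built from the previous 30 days, keyed by event code.
baseline = {
    4625: {"avg": 10.0, "stdev": 3.0},
    517: {"avg": 0.1, "stdev": 0.3},
}
# Made-up counts for the day being checked, keyed by (ComputerName, EventCode).
todays_counts = {
    ("HOST-A", 4625): 12,
    ("HOST-B", 4625): 40,
    ("HOST-C", 517): 1,
    ("HOST-D", 9999): 5,
}
flagged = flag_outliers(todays_counts, baseline)
```

The point is the same as in the search: the threshold comes from your own environment's history per event code, so a count that is normal for one event code can still stand out for another.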

Extreme Search lets you leverage simple statistical analysis and make it more dynamic and tuned for your organization's environment.

Happy Hunting,

Local Tech Repair Admin

PS:

Please comment with questions and I will answer where I can. I am a certified Splunk admin, but I do not work for Splunk, so if you have detailed questions it may be best to ask Splunk support. If you learn something useful, please let the community know, so we can all learn to build better defenses.

I will probably write an article on how to leverage Machine Learning for your environment, to better detect security anomalies and leverage it for threat hunting.

Hi, good article, thanks for sharing!

btw. you've got a typo here in "ComptuerName":

|xsDisplayContext Event_Code_Count_by_ComptuerName_1d by 517

What is 517 ?

you are correct that is an error.

the event code 517 for audit log being cleared

https://www.ultimatewindowssecurity.com/securitylog/encyclopedia/event.aspx?eventid=517